M.A. Thesis · Interaction Design · University of Kansas · 2025

SmartSounds

Designing an adaptive biofeedback music experience that turns physiological data into something you can feel — not just track.

Two powerful tools.

Siloed from each other.

Wearable technology has transformed how we understand the body. Millions of people wear devices such as Whoop bands, Oura rings, Garmin watches, and the Apple Watch that continuously measure heart rate, heart rate variability (HRV), respiratory rate, sleep quality, and strain. The data is rich, specific, and deeply personal.

At the same time, music is one of the most well-researched behavioral interventions available. Research consistently shows that music influences cognitive function, physical endurance, emotional regulation, and stress response. The right music at the right moment makes a measurable difference.

But these two tools almost never talk to each other. Wearable data stays locked in dashboards. Music stays behind playlists and mood categories. No platform has built a real-time, physiologically informed bridge between the two.

Users can track their body, and they can listen to music — but there are almost no experiences that intelligently connect both in real time.

The gap SmartSounds closes

Your wearable knows your body is in recovery mode. Your music app doesn't. SmartSounds reads the signal and plays something that supports where your body actually is — not where your playlist thinks you should be.

The mismatch is costing performance.

Suboptimal music pairing during exercise doesn't just feel off; it actively increases perceived strain. When tempo and energy fail to align with a user's physiological state, the music works against them. Someone in deep recovery listening to high-intensity EDM isn't getting support. They're getting interference.

Problem 01

Mismatch Between Music and Body State

Music whose tempo is too fast or too slow for the user's physiological state disrupts rhythm rather than enhancing it, reducing endurance or focus instead of supporting it.

Problem 02

Overstimulation During Recovery

High-intensity music during recovery windows increases stress rather than reducing it. The body needs parasympathetic activation. The wrong sound profile blocks it.

Problem 03

Cognitive Distraction, Not Enhancement

Background music meant to improve focus during work or study often backfires when chosen by mood rather than calibrated to cognitive load.

Problem 04

Decision Fatigue in Music Selection

Choosing the right playlist before a workout, focus session, or sleep takes mental effort. Most users default to the same playlists — or spend too long deciding.

The Design Opportunity

Create a system that transforms biometric signals into adaptive music interactions — helping users feel more in control of their physical and emotional state, without requiring them to understand the underlying physiology. The data already exists. The experience just hasn't been designed yet.

- How might music respond to a user's physiological state in a way that feels natural rather than clinical?

- How might wearable biofeedback become more actionable through adaptive audio?

- How might we reduce decision fatigue when choosing music for workouts, focus, or recovery?

- How might adaptive music feel supportive rather than intrusive or over-automated?

The science behind adaptive sound.

The research foundation spans music psychology, wearable tech ecosystems, biofeedback systems, exercise science, and behavioral design. A literature review of 20+ peer-reviewed studies was combined with competitive analysis of 8 platforms and a user survey of 27 participants aged 25–34.

The research confirmed that music and physiology are deeply entangled. Fast-tempo music (120–140 BPM) elevates heart rate and enhances high-intensity performance. Slower tempos improve recovery and reduce tension during rest. Music synchronized with heart rate measurably reduces perceived exertion — the same workout feels easier.

Behavioral Clustering: 3 User Archetypes

| Feature | Low Engagement | Casual | High Intensity |
|---|---|---|---|
| Real-time adaptation | Not expected | Optional | Critical |
| Mood-based playlists | Gentle intro | Appealing | Helpful |
| Wearable integration | Not necessary | Optional | Core feature |
| Stress recovery mode | Strong fit | Strong fit | Supplementary |
| Social features | Neutral | Optional | Encouraged |


Users Already Self-Medicate with Music

People intuitively use music to regulate mood, energy, and performance — they just do the matching work manually, every single time.

Wearable Data Isn't Actionable Enough

Wearables provide useful signals, but translating them into daily behavioral decisions still requires significant user effort and biometric literacy.

Personalization ≠ Physiological Responsiveness

Spotify personalizes based on taste history. State-based and taste-based personalization are fundamentally different problems requiring different logic.

Trust & Control Are Non-Negotiable

Survey participants responded positively to adaptive recommendations — but only when they understood why and felt able to override the system.

What users said

Sometimes music doesn't match my current activity — like sad or slow music while lifting weights.

— Survey Participant, Age 28

I use shuffle but the playlist doesn't provide the mood I want. Choosing what songs to run to takes too long.

— Survey Participant, Age 31

It would be nice if music picked up on the tempo of my yoga practice and created a flow-based playlist automatically.

— Survey Participant, Age 26

Three distinct relationships with music.

Survey data clustered into three behavioral archetypes — based on music habits, wearable engagement, and tolerance for automation. Each maps to different feature priorities and interaction patterns.

Riley

Software Engineer · Runner · Chicago

Mindset

Treats exercise as precision work. Wants optimization over inspiration. Views biometric data as a competitive edge.

Pain Points

Managing multiple apps and data sources. No connection between what she hears and what her body is doing at each HR zone.

Needs from SmartSounds

BPM alignment with HR zones. Automatic mode switching at intensity thresholds. Post-session audio impact analytics.

Alex

Marketing Manager · Cyclist · Denver

Mindset

Active but not obsessive about metrics. Wants personalization, but only if it's effortless.

Pain Points

Bored of the same playlists. Finds existing fitness apps too data-focused, not vibe-focused.

Needs from SmartSounds

Mood-based entry points. Adaptive suggestions that feel relevant. Simple controls with enough transparency to build trust.

Jordan

Freelance Designer · San Francisco

Mindset

Music is emotional support, not a performance tool. Values simplicity. Low trust in adaptive technology.

Pain Points

Complex features feel intimidating. Often skips wellness tools because they feel like another task to manage.

Needs from SmartSounds

Zero-configuration entry. Plain language. Stress recovery mode as primary value — no wearable required to begin.

From signal to sound.

SmartSounds reads biometric signals from wearables, interprets the user's physiological state, maps that state to an appropriate audio response, and delivers it with enough transparency that users understand why — and enough control that they always feel in charge.

The goal was never to display more data. It was to make the data disappear into the experience — felt, not read.
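The read, interpret, map, and deliver steps described above can be sketched as a small pipeline. This is a minimal Python sketch under stated assumptions, not the SmartSounds implementation: the `Reading` fields, the state names, the `AUDIO_FOR_STATE` mapping, and every threshold are hypothetical, and only the 120-140 BPM range comes from the research summarized earlier.

```python
from dataclasses import dataclass


# Stage 1: biometric input, normalized into one record (hypothetical schema).
@dataclass
class Reading:
    heart_rate: int         # beats per minute
    hrv_ms: float           # heart rate variability in milliseconds
    hrv_baseline_ms: float  # the user's typical HRV, used as a personal reference
    hour: int               # local hour of day, 0-23


def interpret_state(r: Reading) -> str:
    """Stage 2: reduce raw signals to a coarse physiological state.
    Thresholds are illustrative assumptions, not validated values."""
    if r.heart_rate >= 130:
        return "exerting"
    if r.hrv_ms < 0.85 * r.hrv_baseline_ms:
        return "under-recovered"
    if r.hour >= 22 or r.hour < 5:
        return "winding down"
    return "steady"


# Stage 3: map each state to an audio response (hypothetical mapping).
AUDIO_FOR_STATE = {
    "exerting": "high-energy playlist, 120-140 BPM",
    "under-recovered": "recovery soundscape, slow tempo",
    "winding down": "sleep soundscape",
    "steady": "focus playlist",
}


def deliver(r: Reading, auto_play: bool) -> dict:
    """Stage 4: pair the audio with its reason and honor the user's control setting."""
    state = interpret_state(r)
    return {
        "audio": AUDIO_FOR_STATE[state],
        "reason": f"Based on your: HR {r.heart_rate} · {state}",
        "action": "play" if auto_play else "suggest",
    }


print(deliver(Reading(heart_rate=72, hrv_ms=41.0, hrv_baseline_ms=58.0, hour=7), auto_play=False))
```

Returning the reason and the action together with the audio choice means the interface never has to reconstruct why something is playing.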

Biometric Inputs: Heart Rate (HR), Heart Rate Variability (HRV), Stress Indicators, Respiratory Rate, Sleep / Recovery State, Time of Day

Processing Layer: AI DJ (Flow & Transitions), Biofeedback Analyzer, Mood Classifier, Adaptive Audio Controller

User Context: Activity Type, Mood Selection, Wearable Connection, Music Preferences

Sound Output: Adaptive Playlists, Dynamic BPM Shifts, AI Soundscapes, Binaural Beats, Recovery Soundscapes

What the product actually does.

Feature prioritization was structured around MVP necessity versus future opportunity. Every feature was evaluated against one question: does this reduce friction, or add it?

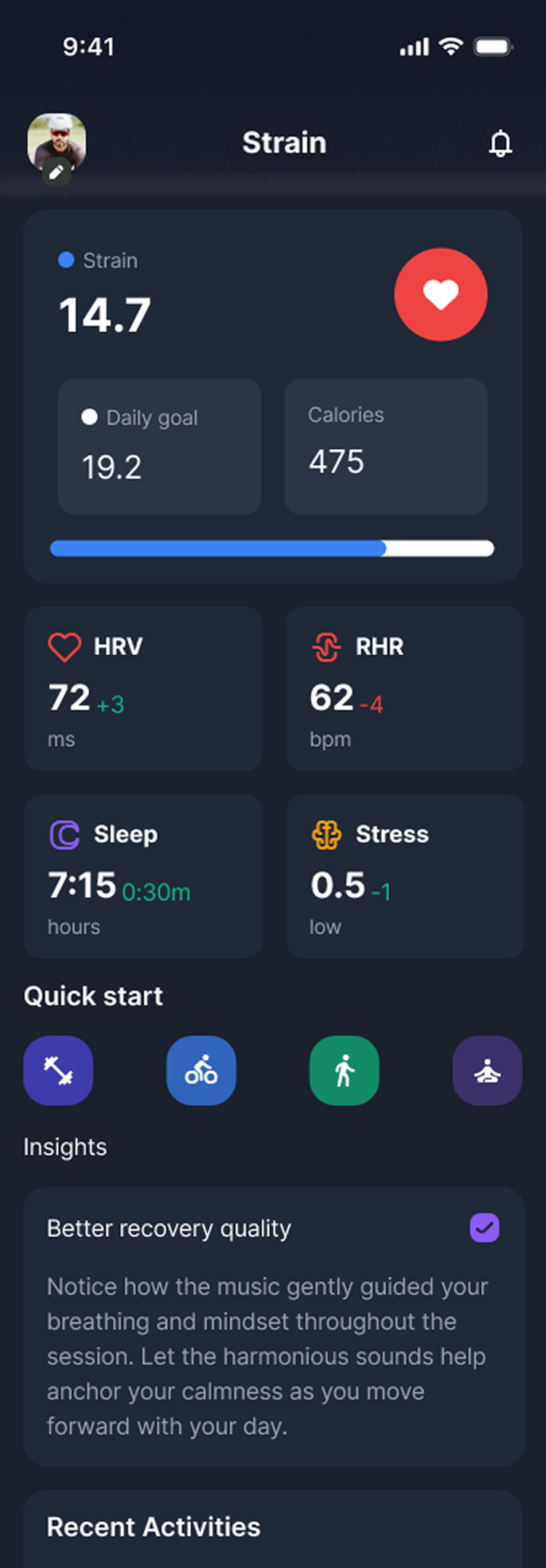

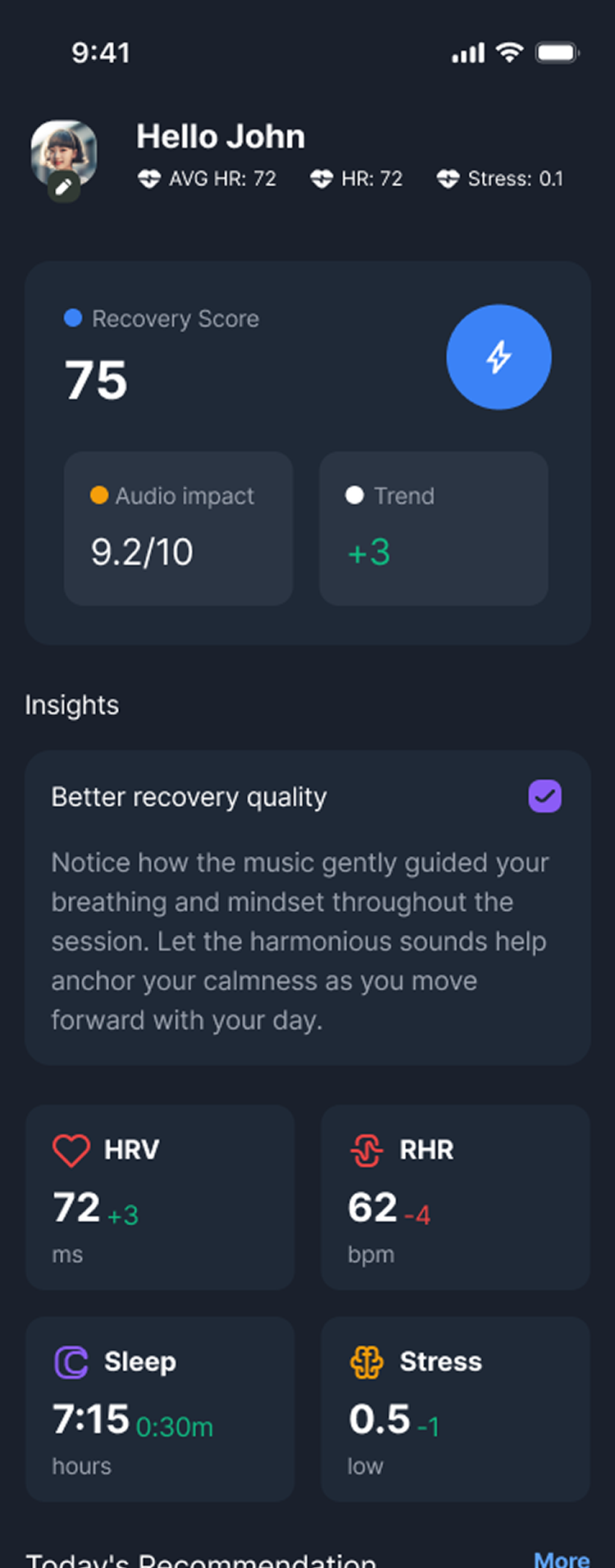

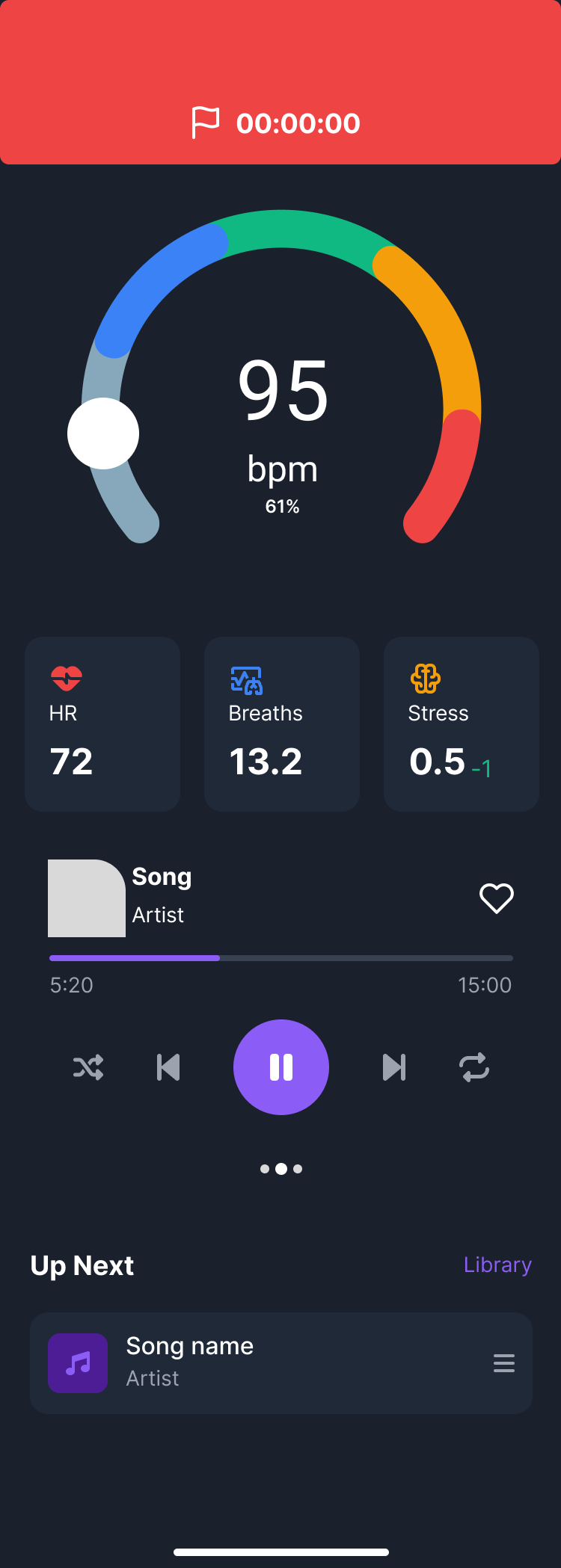

Adaptive Music Modes

Strain, Recovery, Sleep, Focus — each with unique audio logic and biometric trigger thresholds calibrated to different physiological states.
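One way to implement per-mode trigger thresholds is a small state machine with hysteresis. In this sketch, assumed for illustration, the `ModeSpec` tempo ranges (other than Strain's 120-140 BPM), the `STRAIN_ENTER_HR` and `STRAIN_EXIT_HR` values, the HRV ratio cut-off, and the sleep hours are hypothetical placeholders rather than calibrated thresholds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModeSpec:
    name: str
    bpm_range: tuple[int, int]  # (low, high) target tempo for this mode's audio


# Hypothetical audio logic per mode; only Strain's 120-140 BPM range comes from the research.
MODES = {
    "Strain": ModeSpec("Strain", (120, 140)),
    "Recovery": ModeSpec("Recovery", (60, 80)),
    "Sleep": ModeSpec("Sleep", (40, 60)),
    "Focus": ModeSpec("Focus", (70, 100)),
}

STRAIN_ENTER_HR = 130  # assumed: switch into Strain at or above this heart rate
STRAIN_EXIT_HR = 115   # assumed: leave Strain only after heart rate drops below this


def next_mode(current: str, heart_rate: int, hrv_ratio: float, hour: int) -> str:
    """Choose the next mode. hrv_ratio is current HRV divided by the user's baseline."""
    # Hysteresis: entering and leaving Strain use different thresholds,
    # so a heart rate hovering near one value does not flip modes back and forth.
    if current == "Strain":
        if heart_rate >= STRAIN_EXIT_HR:
            return "Strain"
    elif heart_rate >= STRAIN_ENTER_HR:
        return "Strain"
    if hour >= 22 or hour < 5:
        return "Sleep"
    if hrv_ratio < 0.85:
        return "Recovery"
    return "Focus"
```

Separate enter and exit thresholds are one way to deliver the automatic mode switching Riley asks for without the music flipping every time heart rate crosses a single line.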

Biometric-Informed Recommendations

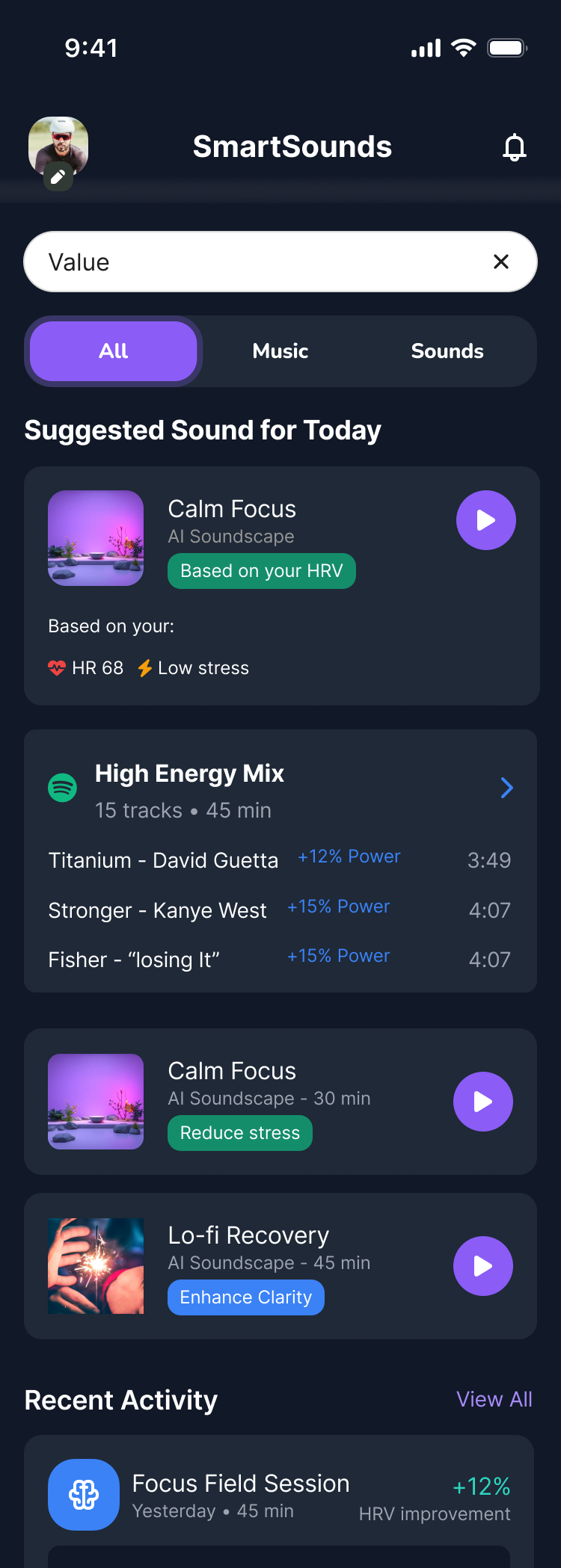

Real-time suggestions based on HRV, heart rate, stress level, and recovery score — not just taste history.
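The difference between state-based and taste-based recommendation can be shown with a ranking function that scores tracks mainly by fit to the user's current body state. This is a hypothetical sketch: the `Track` fields, the linear `target_for` mapping from stress and recovery to tempo and energy, and the 0.3 default taste weight are assumptions, not SmartSounds parameters.

```python
from dataclasses import dataclass


@dataclass
class Track:
    title: str
    bpm: int
    energy: float       # 0.0 (calm) to 1.0 (intense)
    taste_match: float  # 0.0-1.0 similarity to the user's listening history


def target_for(stress: float, recovery: float) -> tuple[int, float]:
    """Map stress (0-1) and recovery score (0-1) to a target BPM and energy.
    The linear mapping is a hypothetical placeholder."""
    calm_need = max(stress, 1.0 - recovery)  # high stress or poor recovery both call for calmer audio
    target_bpm = round(130 - 60 * calm_need)         # 70 to 130 BPM
    target_energy = round(0.9 - 0.7 * calm_need, 2)  # 0.2 to 0.9
    return target_bpm, target_energy


def recommend(tracks: list[Track], stress: float, recovery: float,
              taste_weight: float = 0.3) -> list[Track]:
    """Rank tracks mostly by fit to the current body state, partly by taste."""
    target_bpm, target_energy = target_for(stress, recovery)

    def score(t: Track) -> float:
        bpm_gap = min(abs(t.bpm - target_bpm) / 60, 1.0)  # normalized tempo distance
        energy_gap = abs(t.energy - target_energy)
        state_fit = 1.0 - 0.5 * bpm_gap - 0.5 * energy_gap
        return (1 - taste_weight) * state_fit + taste_weight * t.taste_match

    return sorted(tracks, key=score, reverse=True)


library = [Track("Peak Hour", 140, 0.95, 0.9), Track("Low Tide", 80, 0.3, 0.4)]
print([t.title for t in recommend(library, stress=0.8, recovery=0.9)])
```

Setting `taste_weight=1.0` collapses the score to taste history alone, and the high-energy track wins again; that contrast is why state-based and taste-based personalization need different logic. The track titles are made up for the example.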

Transparent Reasoning

"Based on your: HR 68 · Low stress" — every recommendation shows users exactly why it was made, building trust through legibility.

Dynamic BPM Matching

Music tempo adjusts in real time to match exercise intensity, transitioning fluidly as heart rate zones shift during a session.
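Dynamic BPM matching can be approximated by mapping heart rate zones to target tempos and limiting how fast the tempo may change. This sketch assumes a five-zone model based on percent of maximum heart rate; the `ZONE_TARGET_BPM` values and the 4 BPM `max_step` are hypothetical, with zones 4 and 5 placed in the 120-140 BPM range cited in the research.

```python
def hr_zone(heart_rate: int, max_hr: int) -> int:
    """Return heart rate zone 1-5 from percent of max HR.
    Uses commonly cited 60/70/80/90% cut-offs; zone 1 covers everything below 60%."""
    pct = heart_rate / max_hr
    if pct < 0.6:
        return 1
    if pct < 0.7:
        return 2
    if pct < 0.8:
        return 3
    if pct < 0.9:
        return 4
    return 5


# Hypothetical zone-to-tempo targets; zones 4-5 fall in the 120-140 BPM range.
ZONE_TARGET_BPM = {1: 90, 2: 105, 3: 115, 4: 125, 5: 140}


def next_bpm(current_bpm: float, heart_rate: int, max_hr: int, max_step: float = 4.0) -> float:
    """Move playback tempo toward the current zone's target by at most max_step BPM
    per update, so a zone change produces a gradual ramp rather than an abrupt jump."""
    target = ZONE_TARGET_BPM[hr_zone(heart_rate, max_hr)]
    delta = target - current_bpm
    if abs(delta) <= max_step:
        return float(target)
    return current_bpm + max_step if delta > 0 else current_bpm - max_step
```

With these values, moving from zone 2 to zone 5 takes nine updates (105 to 140 BPM in steps of at most 4 BPM), which is what keeps the transition fluid.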

User Control Settings

Adjustable automation level — full auto, semi-auto, or suggestion-only — so users can dial in exactly how much the system decides for them.
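The three automation levels can be expressed as a single decision about what to do with each new recommendation. The behaviors attached to each level here (immediate switch, confirmation prompt, suggestion card) and the `paused` override are assumptions about how the setting could work, sketched in Python.

```python
from enum import Enum


class Automation(Enum):
    FULL_AUTO = "full auto"
    SEMI_AUTO = "semi-auto"
    SUGGESTION_ONLY = "suggestion-only"


def action_for(level: Automation, paused: bool = False) -> str:
    """Decide what the app does with a new recommendation.
    Assumed meanings: 'play' switches immediately, 'confirm' asks first,
    'suggest' only shows a recommendation card."""
    if paused:
        return "suggest"  # a user override wins: never change the music while paused
    if level is Automation.FULL_AUTO:
        return "play"
    if level is Automation.SEMI_AUTO:
        return "confirm"
    return "suggest"
```

Checking `paused` before anything else means a user override always takes precedence over the selected automation level.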

Post-Session Audio Impact Analysis

After each session: HRV trend, deep sleep improvement, perceived exertion — tied directly to what was playing during the session.
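Tying outcomes to what was playing requires aligning timestamped biometric samples with the playback log. This sketch assumes a sorted list of track start times and treats each track as playing until the next one starts (the last one until the final sample); the function name and data shapes are hypothetical.

```python
from bisect import bisect_right
from collections import defaultdict
from statistics import mean


def per_track_average(plays: list[tuple[float, str]],
                      samples: list[tuple[float, float]]) -> dict[str, float]:
    """Attribute each biometric sample to the track playing at that moment.

    plays:   (start_seconds, track_title) pairs, sorted by start time
    samples: (timestamp_seconds, value) pairs, e.g. heart rate readings
    Returns {track_title: mean value while that track played}.
    """
    starts = [start for start, _ in plays]
    buckets: dict[str, list[float]] = defaultdict(list)
    for t, value in samples:
        i = bisect_right(starts, t) - 1  # last track that started at or before t
        if i >= 0:                       # ignore samples taken before the session started
            buckets[plays[i][1]].append(value)
    return {title: mean(values) for title, values in buckets.items()}
```

Averages like these show association with what was playing, not proof that a track caused the change.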

Wearable Integration Layer

Connects to Garmin, Whoop, Oura, Apple Health, and Fitbit via API — no manual input required once connected.
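Supporting several wearables without manual input usually means normalizing each provider's data into one internal format behind a common interface. The adapters below are hypothetical stand-ins: their payload keys are invented for illustration and are not the actual Garmin, Whoop, Oura, Apple Health, or Fitbit schemas, and real integrations would also handle authentication and syncing, which this sketch omits.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass
class Sample:
    """Normalized reading used by the rest of the system."""
    source: str
    heart_rate: int
    hrv_ms: Optional[float]  # not every device reports HRV in every payload


class WearableSource(Protocol):
    def latest(self) -> Sample: ...


class RingAdapter:
    """Hypothetical adapter; the payload keys are invented, not a real vendor schema."""

    def __init__(self, payload: dict):
        self.payload = payload

    def latest(self) -> Sample:
        return Sample("ring", int(self.payload["bpm"]), self.payload.get("hrv"))


class WatchAdapter:
    """Hypothetical adapter for a device whose payload nests heart rate and omits HRV."""

    def __init__(self, payload: dict):
        self.payload = payload

    def latest(self) -> Sample:
        return Sample("watch", int(self.payload["heartRate"]["value"]), None)


def read_all(sources: list[WearableSource]) -> list[Sample]:
    """Collect one normalized Sample from every connected source."""
    return [s.latest() for s in sources]
```

Because downstream code only sees `Sample`, adding another device means writing one more adapter rather than touching the recommendation logic.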

AI Soundscapes + Spotify Integration

Dual-output: AI-generated ambient soundscapes for deep states, or curated Spotify playlists when users prefer familiar music.

Double Diamond, end to end.

The project followed the Double Diamond framework — Discover, Define, Develop, Deliver — moving from broad research and problem framing through system design and high-fidelity prototyping.

Discover — Literature Review & Market Analysis

Deep literature review spanning music psychology, biofeedback systems, HRV science, and wearable tech. Competitive analysis of 8 platforms. User survey with 27 participants provided primary validation signal.

Define — Personas, Pain Points & Journey Mapping

Survey data clustered into three behavioral archetypes. Journey maps built across four core scenarios — Strain, Recovery, Sleep, Focus — identifying pain points, opportunity moments, and emotional states at each phase.

Develop — IA, User Flows & Component System

Information architecture and user flows for each mode. Component library built from scratch — biometric displays, music player controls, insight panels, recommendation cards. Low-fi wireframes iterated before moving to hi-fi.

Deliver — Hi-Fi Prototype, Usability Testing & Iteration

High-fidelity Figma prototype with interactive flows across all four modes, full onboarding, and the adaptive audio data flow. Scenario-based usability testing with before/after refinements based on cognitive load and navigation feedback.

Early wireframes and sketches

Calm intelligence.

Not clinical complexity.

The final interface is built on a single governing principle: this should feel like a thoughtful companion, not a biometric dashboard. Dark, composed, designed to be used at rest, during movement, and before sleep — without adding cognitive load.

- State-based home screen — reconfigures based on time of day and wearable signals. What's shown at 6AM post-run differs from 10PM pre-sleep.

- Plain language, not raw numbers. "Your body needs recovery" rather than "HRV: 42ms below baseline."

- Transparent recommendation cards showing the biometric reasoning behind every audio suggestion — building trust without demanding literacy.

- Onboarding under 3 minutes with optional depth for power users who want to configure wearables and automation level.

- Post-session insights that connect listening behavior to measurable physiological outcomes — closing the feedback loop most platforms leave open.
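The state-based home screen and the plain-language messages can both be driven by small translation functions. In this sketch the mode rules, the HRV-to-baseline ratio bands, and the message wording (apart from "Your body needs recovery", quoted above) are assumptions.

```python
def home_mode(hour: int, just_finished_workout: bool) -> str:
    """Pick the home screen's featured mode from time of day and recent activity (assumed rules)."""
    if just_finished_workout:
        return "Recovery"
    if hour >= 21 or hour < 5:
        return "Sleep"
    return "Focus"


def plain_language(hrv_ms: float, baseline_ms: float) -> str:
    """Translate HRV relative to the user's baseline into a sentence (bands are hypothetical)."""
    ratio = hrv_ms / baseline_ms
    if ratio < 0.8:
        return "Your body needs recovery"
    if ratio < 0.95:
        return "Recovery is a bit below your usual"
    return "You're within your usual range"
```

For instance, an HRV 42 ms below a hypothetical 90 ms baseline (a ratio of about 0.53) falls in the lowest band, so the user sees a sentence instead of the raw number.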

Tested against real scenarios.

Validation came through scenario-based usability testing across six core tasks. Qualitative feedback assessed desirability, clarity, trust, perceived value, and willingness to use over time.

Wearable Data Feels More Useful

Participants noted SmartSounds made their biometric data feel actionable for the first time — not just informational.

Transparency Builds Trust

Adaptive recommendations were well-received when accompanied by reasoning. Users responded negatively to suggestions without context.

Control Matters as Much as Automation

Users wanted the system to help, not decide. The ability to override or pause automation was cited as essential to sustained use.

Context-Specific Modes Resonated Strongly

Users strongly preferred modes tied to specific contexts — Focus, Strain, Recovery — over generic categories like "mood."

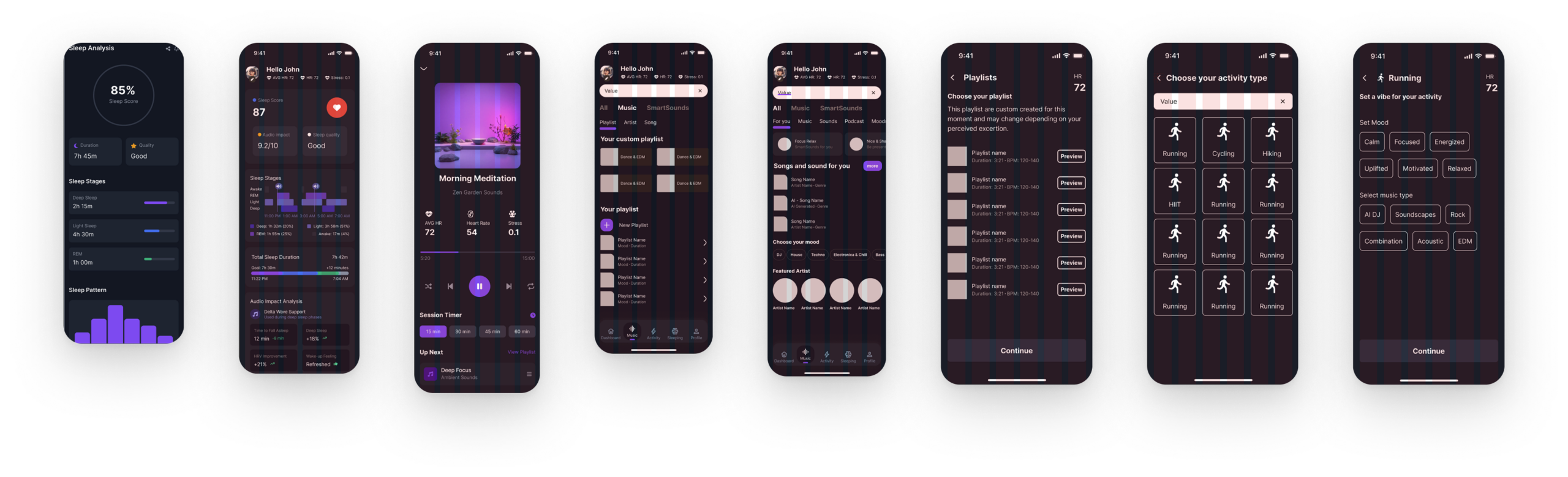

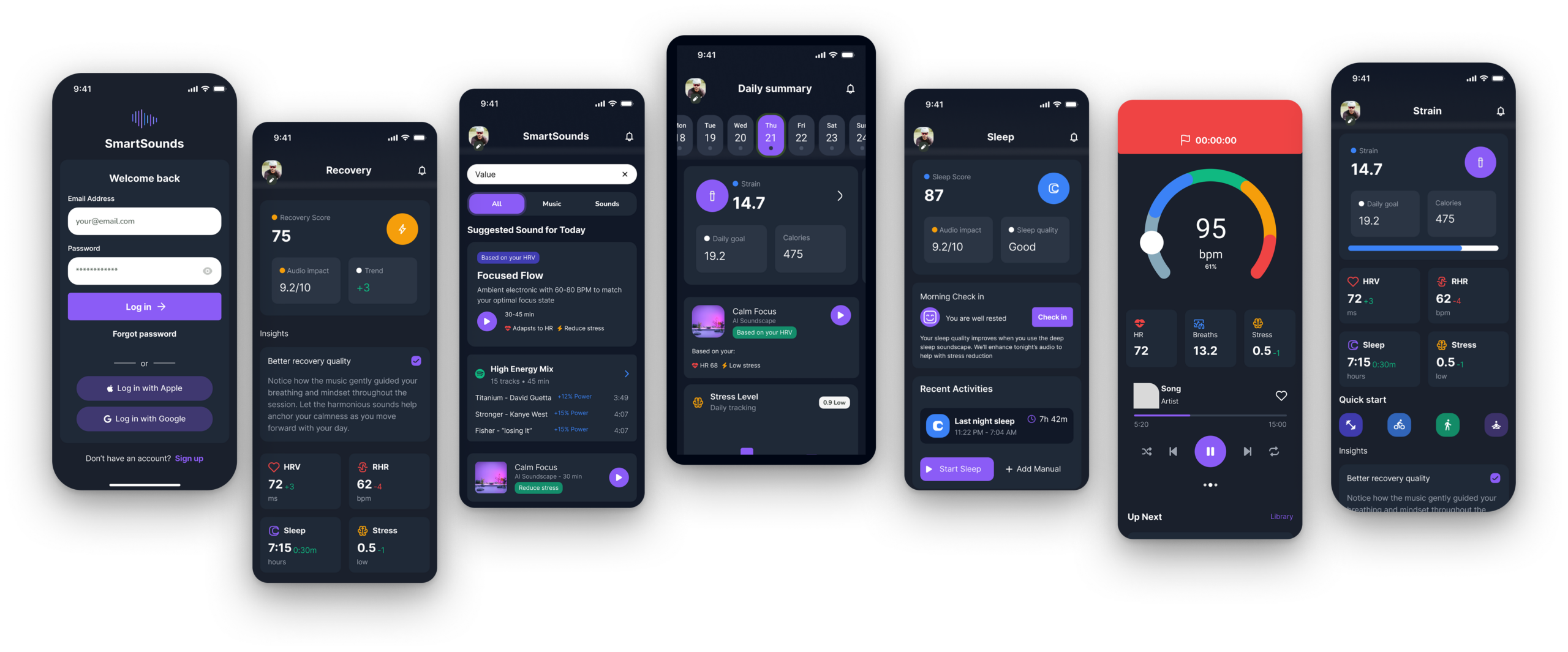

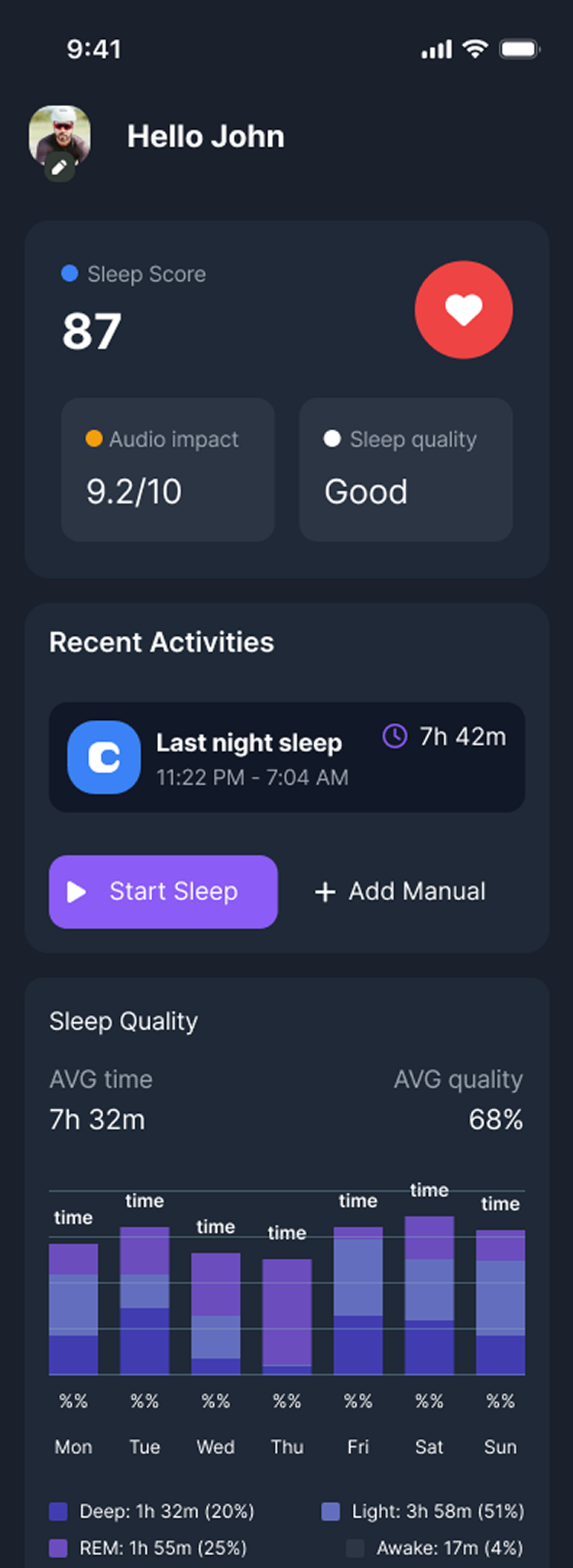

Final prototype screens

High-fidelity Figma prototype across four core modes — Strain, Recovery, Sleep, and Focus.

SmartSounds — Full interface overview

Strain mode

Recovery mode

Sleep mode

Onboarding

Before / after usability iterations

What this project established.

SmartSounds demonstrated that the intersection of wearable technology and adaptive media is not only feasible as a product category — it's genuinely wanted by the people who would use it.

3 distinct user archetypes defined and validated through behavioral clustering analysis

4 core experience modes with full user flows, journey maps, and hi-fi interface screens

8 competing platforms analyzed to map the white space this concept occupies in the market

- Clarified a viable product opportunity at the intersection of wearables and adaptive media — a space no current product fully occupies.

- Demonstrated how biometric signals can become human-centered interactions that feel supportive rather than surveillance-like.

- Developed a scalable concept architecture extending across wellness, sport, and cognitive performance contexts.

- Established a design framework — biometric input → state interpretation → transparent recommendation → user control — applicable beyond music to other adaptive health experiences.

What designing at the edge of technology teaches.

Designing for emerging technology is an exercise in restraint. The technical possibilities of biofeedback-informed experiences are vast — the design challenge is deciding what not to do. Users don't want to feel monitored. They want to feel supported. That distinction shapes every interface decision.

Users don't want more data. They want that data to disappear into an experience that quietly improves their life without requiring them to think about it.

- Adaptive systems must remain explainable. Every automated decision creates a moment of either trust or suspicion. The design work is in building the former.

- Control is a feature, not a failure mode. Giving users the ability to override adaptive logic doesn't undermine the system — it makes people willing to use it at all.

- The UX challenge in health tech is translation, not display. The gap isn't between data and dashboards. It's between data and meaning.

- Music is a uniquely powerful intervention layer. It is already embedded in daily life and already used for state regulation, which makes it a rare candidate for adaptive personalization that users will actually accept.

- The future of responsive interfaces is context-aware. The next generation of wellness tech won't respond to what you click. It will respond to how your body is actually doing.